Aerial Vehicles

Vision based Collaborative Localization for UAVs

This project presents a collaborative localization pipeline for two or more multirotor vehicles, with a monocular camera as the only sensor required on each vehicle. Features are detected and matched between the individual views, which allows the surrounding environment to be reconstructed; that reconstruction is then used to localize the moving vehicles within the group. Nearby vehicles can communicate at specific instants of time to refine the map and to improve the accuracy of the individual vehicles' position estimates.
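To illustrate the reconstruction step, here is a minimal sketch of how one matched feature could be placed in 3D once the two camera positions and viewing directions are known. It uses midpoint triangulation, which is an assumption chosen for brevity and not necessarily the method used in the project; the function name `triangulate_midpoint` and the example coordinates are hypothetical.

```python
def triangulate_midpoint(c1, d1, c2, d2):
    """Estimate a 3D point from two viewing rays (illustrative sketch).

    c1, c2: camera centers; d1, d2: ray directions toward the matched feature.
    Returns the midpoint of the shortest segment joining the two rays.
    """
    dot = lambda u, v: sum(a * b for a, b in zip(u, v))
    w = [a - b for a, b in zip(c1, c2)]
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w), dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; the point cannot be triangulated")
    s = (b * e - c * d) / denom  # distance parameter along ray 1
    t = (a * e - b * d) / denom  # distance parameter along ray 2
    p1 = [ci + s * di for ci, di in zip(c1, d1)]
    p2 = [ci + t * di for ci, di in zip(c2, d2)]
    return [(u + v) / 2 for u, v in zip(p1, p2)]


# Two hypothetical vehicles 1 m apart observing the same feature.
point = triangulate_midpoint([0, 0, 0], [0.5, 0, 2], [1, 0, 0], [-0.5, 0, 2])
```

With noisy matches the two rays do not intersect exactly, which is why the midpoint of their closest approach is returned instead of an exact intersection.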

Thermal vision for UAVs

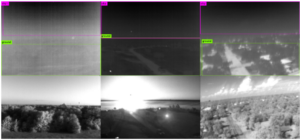

This project focuses on integrating thermal cameras for enhanced drone vision. The goal is to implement thermal camera vision on a drone using cheap, lightweight thermal sensors. The effectiveness of thermal vision will be studied to determine the environments in which thermal data is most useful, after which possible applications can be explored. Pictured here is a thermal camera calibration experiment using a one-dimensional calibration object.

Sky-Ground Segmentation

Recent work in this project includes sky-ground segmentation and obstacle detection using thermal sensor information only. We have also demonstrated that existing neural networks, such as YOLO, can be retrained on thermal data and yield good results. Current work is focused on making autonomy possible with thermal vision exclusively. Future work will focus on cross-calibration of thermal and visual data and on improving the robustness of autonomy.

Ground Vehicles

Autonomous Shuttle

Our goal is to develop and deploy autonomous shuttles on the Texas A&M campus and on other private campuses such as hotels and golf courses. To this end, we are interested in developing robust localization, mapping, obstacle avoidance, and control algorithms.

Autonomous Cone Placement

The goal of this project is to develop cones that are capable of localizing and placing themselves to improve safety conditions for highway workers. These cones utilize RTK GPS and onboard filtering to produce decimeter-level accuracy in placing themselves in road conditions. Additionally, they are capable of transitioning through GPS-denied environments such as under bridges or overpasses.
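As a rough illustration of how onboard filtering can carry a position estimate through a GPS outage, the sketch below runs a scalar Kalman filter that predicts from commanded velocity at every step and corrects only when a GPS fix is available. The filter type, the function names, and all noise values are assumptions made for illustration; the description above does not specify which filter the cones use.

```python
def predict(x, p, velocity, dt, q):
    """Dead-reckoning step: advance the position and grow the uncertainty."""
    return x + velocity * dt, p + q


def update(x, p, z, r):
    """Correction step: blend in a GPS measurement z with variance r."""
    k = p / (p + r)  # Kalman gain
    return x + k * (z - x), (1 - k) * p


def run_filter(x0, p0, steps, dt=0.1, q=0.01, r=0.0004):
    """Run the filter over (velocity, gps) pairs; gps is None when denied.

    q and r are illustrative values only (r corresponds to a 2 cm standard
    deviation), not measured properties of any real receiver.
    """
    x, p = x0, p0
    history = []
    for velocity, gps in steps:
        x, p = predict(x, p, velocity, dt, q)
        if gps is not None:
            x, p = update(x, p, gps, r)
        history.append((x, p))
    return history


# Hypothetical run: a fix, two steps under a bridge, then a fix again.
history = run_filter(0.0, 1.0, [(1.0, 0.1), (1.0, None), (1.0, None), (1.0, 0.4)])
```

In this sketch the variance grows at each step without a fix and drops again as soon as GPS returns, which is the behavior needed when passing under a bridge or overpass.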

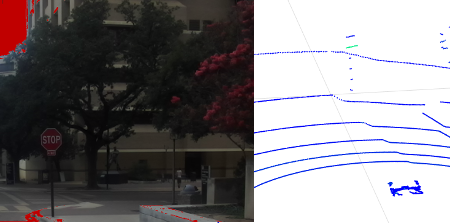

Sign Detection with LIDAR

Autonomous vehicles must be able to identify street signs correctly. This project investigates the feasibility of using a LIDAR sensor to detect and classify signs for autonomous vehicles. Current popular methods for sign detection are vision based; in low-visibility conditions, however, a LIDAR detection method can be used instead.

1/10th scale waypoint following

This project focuses on creating a 1/10th scale platform capable of high-speed waypoint following. This will allow testing of control algorithms in a low-risk environment.
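As one example of the kind of control algorithm such a platform could be used to test, the sketch below computes a steering angle with the pure pursuit method on a bicycle model. Pure pursuit is chosen here only for illustration and is not stated to be the controller used in the project; the function name and the 0.3 m wheelbase are hypothetical.

```python
import math


def pure_pursuit_steering(pose, target, wheelbase):
    """Steering angle (rad) that arcs the vehicle toward a target waypoint.

    pose: (x, y, heading) of the rear axle in the world frame, heading in rad.
    target: (x, y) of the lookahead waypoint.
    wheelbase: distance between the axles, in the same units as x and y.
    """
    x, y, heading = pose
    dx, dy = target[0] - x, target[1] - y
    lookahead = math.hypot(dx, dy)
    if lookahead == 0.0:
        return 0.0  # already at the waypoint; keep the wheels straight
    alpha = math.atan2(dy, dx) - heading  # bearing to target in vehicle frame
    return math.atan2(2.0 * wheelbase * math.sin(alpha), lookahead)


# Hypothetical 1/10th scale car with a 0.3 m wheelbase.
straight = pure_pursuit_steering((0.0, 0.0, 0.0), (1.0, 0.0), 0.3)
left = pure_pursuit_steering((0.0, 0.0, 0.0), (1.0, 1.0), 0.3)
```

A positive angle steers left and a negative angle steers right under the usual counterclockwise heading convention. At high speed the lookahead distance is typically increased to keep the path smooth.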

Self-driving Vehicle and Pedestrian Communication

Drivers may soon be replaced by self-driving vehicles, so it is important to keep communication between vehicles and pedestrians uninterrupted. This project aims to design a system that communicates with pedestrians both visually and audibly.

Past Projects